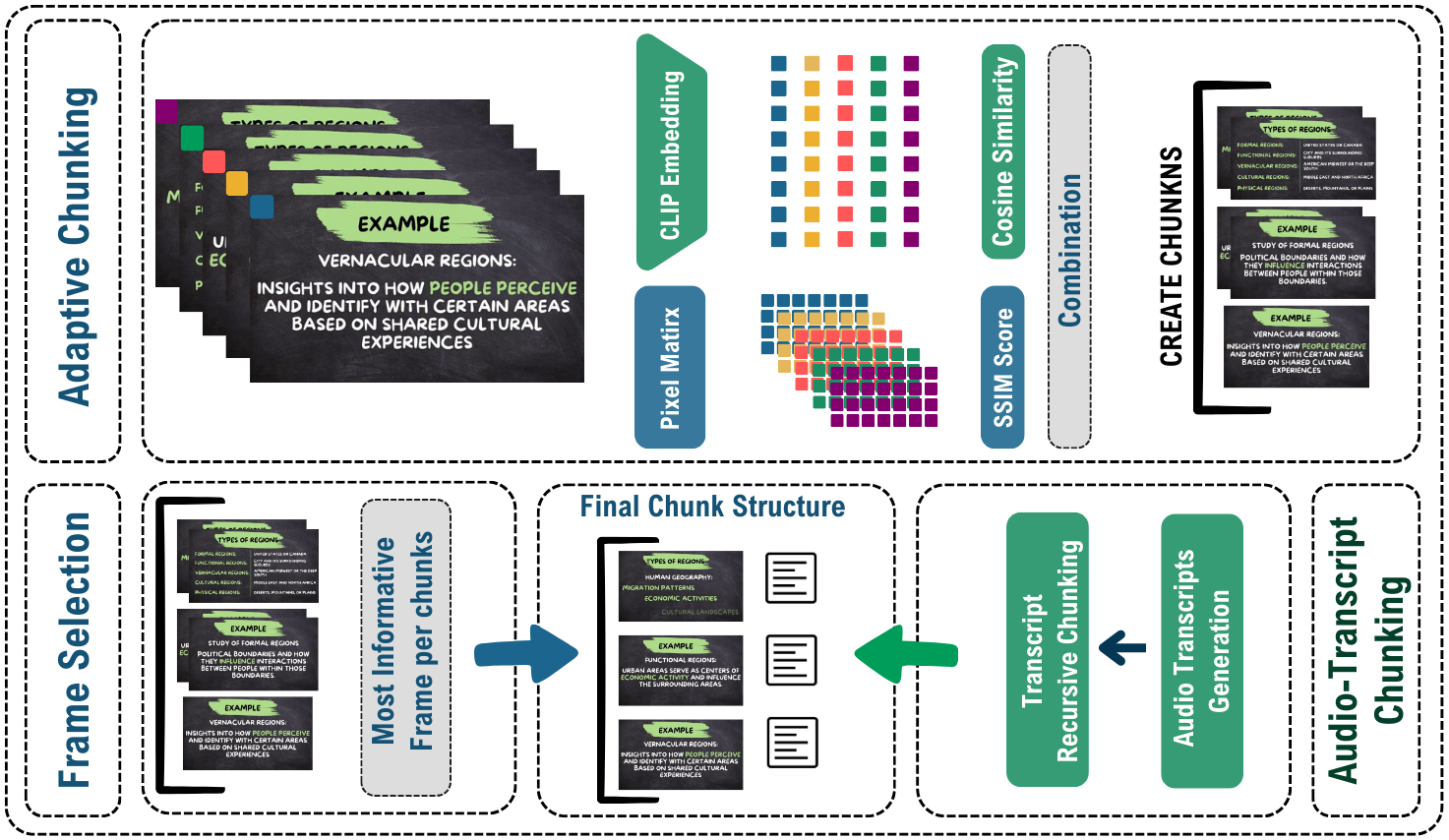

Extending retrieval‑augmented generation (RAG) to the video domain poses new challenges: long videos contain many redundant frames, yet critical events can be sparsely distributed. In this work, we propose adaptive chunking, a method that segments long videos into variable‑length, semantically meaningful units and encodes them before retrieval. By analysing visual changes and scene boundaries, the pipeline creates chunks that preserve important context while discarding redundancy.
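To make the idea of boundary-driven, variable-length segmentation concrete, the following is a minimal sketch rather than the method evaluated here. It assumes each frame has already been reduced to a feature vector (for example a normalised colour histogram) and places a chunk boundary wherever the change between consecutive frames exceeds a threshold. The names `frame_distance` and `adaptive_chunks`, the L1 distance, and the parameters `threshold`, `min_len` and `max_len` are all illustrative assumptions.

```python
from typing import List, Sequence, Tuple


def frame_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """L1 distance between two per-frame feature vectors (assumed representation)."""
    return sum(abs(x - y) for x, y in zip(a, b))


def adaptive_chunks(
    features: Sequence[Sequence[float]],
    threshold: float = 0.5,  # hypothetical cut-off for a "visual change"
    min_len: int = 2,        # hypothetical minimum chunk length, in frames
    max_len: int = 8,        # hypothetical maximum chunk length, in frames
) -> List[Tuple[int, int]]:
    """Split frames into variable-length chunks.

    Returns half-open (start, end) frame-index ranges. A chunk is closed when
    a large change is detected and the chunk is at least min_len frames long,
    or when the chunk reaches max_len frames.
    """
    chunks: List[Tuple[int, int]] = []
    start = 0
    for i in range(1, len(features)):
        length = i - start
        is_boundary = frame_distance(features[i - 1], features[i]) > threshold
        if (is_boundary and length >= min_len) or length >= max_len:
            chunks.append((start, i))
            start = i
    # Close the trailing chunk, if any frames remain.
    if start < len(features):
        chunks.append((start, len(features)))
    return chunks


# Toy input: three frames of one "scene" followed by four frames of another.
toy_features = [[1.0, 0.0]] * 3 + [[0.0, 1.0]] * 4
toy_chunks = adaptive_chunks(toy_features)
```

In this sketch the minimum length keeps brief flickers from producing tiny chunks, while the maximum length forces a cut inside long static scenes so that no single chunk grows without bound.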

Using a bilingual educational video dataset, we build a VideoRAG pipeline in which a vision‑language model summarises each chunk and a language model synthesises answers to questions. Comparative experiments show that adaptive chunking yields higher accuracy on bilingual educational video QA than fixed‑length chunking strategies. These results demonstrate the promise of intelligent segmentation for video retrieval‑augmented generation.
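The retrieval and answer-synthesis stage described above can be sketched as follows. This is an illustrative outline under stated assumptions, not the pipeline used in the experiments: chunk summaries are taken as already produced by a vision‑language model, a toy word-overlap scorer stands in for whatever retriever is actually used (it would not suit languages written without spaces), and the final call to a language model is left out. `ChunkSummary`, `overlap_score`, `retrieve` and `build_prompt` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ChunkSummary:
    start_s: float  # chunk start time in seconds
    end_s: float    # chunk end time in seconds
    text: str       # summary text, assumed to come from a vision-language model


def overlap_score(question: str, summary: str) -> int:
    """Toy relevance score: number of distinct words shared by question and summary."""
    return len(set(question.lower().split()) & set(summary.lower().split()))


def retrieve(question: str, summaries: List[ChunkSummary], k: int = 2) -> List[ChunkSummary]:
    """Return the k summaries with the highest overlap score (ties keep input order)."""
    ranked = sorted(summaries, key=lambda c: overlap_score(question, c.text), reverse=True)
    return ranked[:k]


def build_prompt(question: str, retrieved: List[ChunkSummary]) -> str:
    """Assemble the text that would be passed to the answering language model."""
    context = "\n".join(
        f"[{c.start_s:.0f}s-{c.end_s:.0f}s] {c.text}" for c in retrieved
    )
    return f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"


# Hypothetical summaries for three chunks of a lesson video.
summaries = [
    ChunkSummary(0, 30, "teacher introduces fractions"),
    ChunkSummary(30, 90, "worked example adding fractions on the whiteboard"),
    ChunkSummary(90, 120, "closing remarks and homework"),
]
question = "how are fractions added on the whiteboard"
top = retrieve(question, summaries, k=2)
prompt = build_prompt(question, top)
```

Because each summary carries its chunk's time span, the prompt keeps a pointer back to the part of the video that supports the answer.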