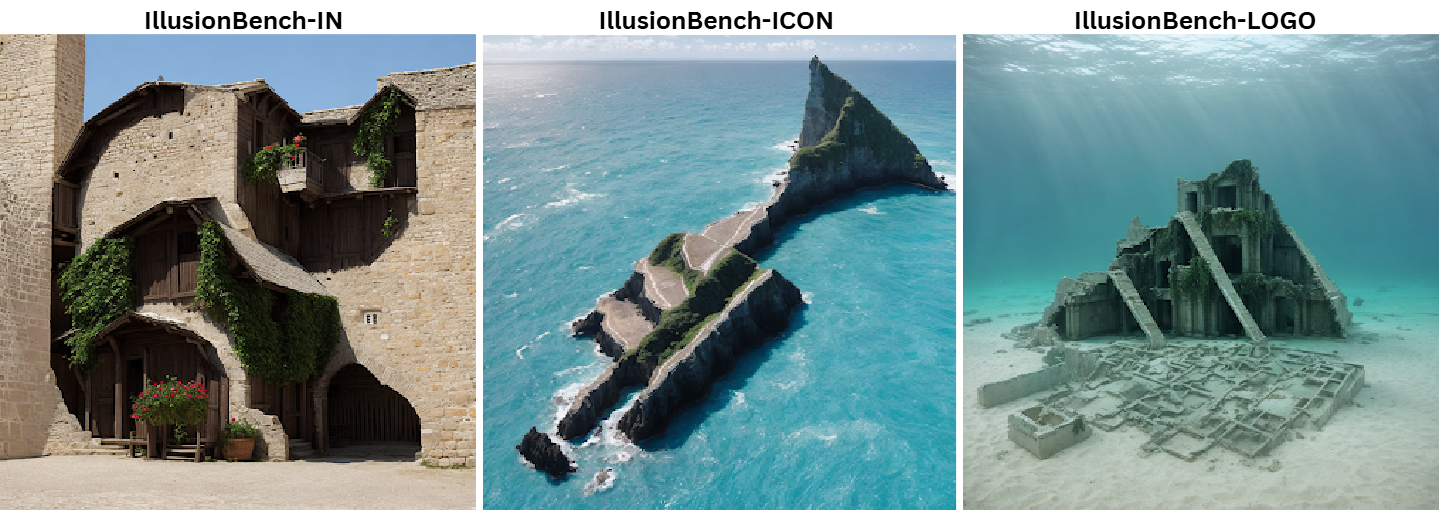

This paper introduces IllusionBench, a family of three datasets designed to test whether vision‑language models truly recognise shapes when those shapes are formed by arranging everyday objects within a scene. By conditioning diffusion models on binary masks, the authors hide letters, faces and animals inside complex environments. Humans find these tasks trivial, achieving near‑perfect accuracy, whereas state‑of‑the‑art models score below 40% in the zero‑shot setting, revealing substantial robustness gaps and highlighting the need for improved multi‑modal perception.

Extensive experiments evaluate GPT‑4, Gemini, LLaVA and other vision‑language models under zero‑shot and few‑shot settings. The results demonstrate that current models often rely on superficial cues rather than developing gestalt perception. IllusionBench is therefore proposed as an open benchmark to audit and drive progress in abstract shape recognition.
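
To make the zero‑shot comparison concrete, the following is a minimal sketch of how per‑model shape accuracy could be scored from free‑text answers. It is not the authors' evaluation code: the function names `normalise` and `shape_accuracy`, the exact‑match rule, and the toy records are all assumptions made for illustration, and the numbers it produces are not results from the paper.

```python
from collections import defaultdict


def normalise(text: str) -> str:
    """Trim whitespace and surrounding punctuation, then lower-case."""
    return text.strip().strip(".,!?\"'").lower()


def shape_accuracy(records):
    """Compute exact-match accuracy per model.

    `records` is an iterable of (model_name, predicted_label, true_shape)
    tuples. This record layout is hypothetical, not the benchmark's format.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for model, predicted, truth in records:
        total[model] += 1
        if normalise(predicted) == normalise(truth):
            correct[model] += 1
    return {model: correct[model] / total[model] for model in total}


# Made-up toy data: "forest" stands in for a model that names the scene
# instead of the shape hidden in it.
records = [
    ("model_a", "Dog.", "dog"),
    ("model_a", "forest", "cat"),
    ("model_b", "cat", "cat"),
    ("model_b", " Dog ", "dog"),
]

scores = shape_accuracy(records)
for model, accuracy in sorted(scores.items()):
    print(f"{model}: {accuracy:.2f}")
```

Exact matching after normalisation is the simplest possible rule; a real evaluation would more likely constrain the model to a fixed list of candidate labels so that answers are directly comparable.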