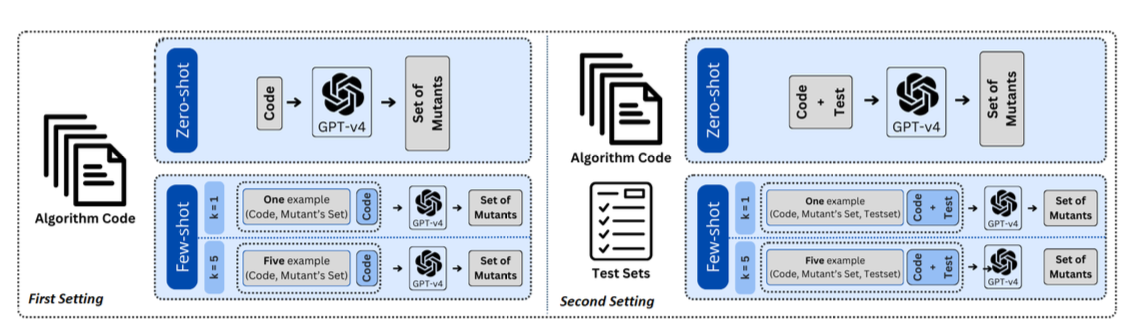

Mutation testing measures the quality of a software test suite by introducing small modifications (mutations) into a program and checking whether the tests detect the changes. This paper explores using GPT‑4 to automate mutation testing in zero‑ and few‑shot settings. Instead of training a specialised model, we prompt GPT‑4 with the original and mutated code snippets and ask it to predict whether the mutation alters the program’s behaviour.
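To make the setup concrete, the Python sketch below generates one mutant with a relational‑operator replacement (`>=` becomes `>`), shows a boundary test that detects ("kills") it, and assembles a zero‑ or few‑shot prompt of the kind described above. Everything named here is hypothetical: the function `is_adult`, the helpers `make_mutant`, `boundary_test` and `build_prompt`, the few‑shot examples and the prompt wording are illustrative assumptions, not the paper's actual prompts, and the request to GPT‑4 itself is omitted. It needs Python 3.9+ for `ast.unparse`.

```python
import ast
from dataclasses import dataclass

# Hypothetical program under test.
ORIGINAL = """
def is_adult(age):
    return age >= 18
"""


class SwapGtE(ast.NodeTransformer):
    """Relational-operator-replacement mutation: turns every `>=` into `>`."""

    def visit_Compare(self, node: ast.Compare) -> ast.Compare:
        self.generic_visit(node)
        node.ops = [ast.Gt() if isinstance(op, ast.GtE) else op for op in node.ops]
        return node


def make_mutant(source: str) -> str:
    """Parse the source, apply the mutation operator, and return the mutant's code."""
    tree = SwapGtE().visit(ast.parse(source))
    ast.fix_missing_locations(tree)
    return ast.unparse(tree)


def load(source: str):
    """Execute the source in a fresh namespace and return the `is_adult` function."""
    namespace: dict = {}
    exec(source, namespace)
    return namespace["is_adult"]


def boundary_test(fn) -> bool:
    """A test that passes on the original; it fails (kills the mutant) if `>=` became `>`."""
    return fn(18) is True and fn(17) is False


@dataclass
class Example:
    original: str
    mutant: str
    label: str  # "CHANGED" or "EQUIVALENT"


# Hypothetical few-shot examples; pass an empty list for the zero-shot setting.
FEW_SHOT = [
    Example("def f(a, b):\n    return a - b", "def f(a, b):\n    return a + b", "CHANGED"),
    # len() is never negative, so `== 0` and `<= 0` behave identically here.
    Example("def f(xs):\n    return len(xs) == 0", "def f(xs):\n    return len(xs) <= 0", "EQUIVALENT"),
]


def build_prompt(original: str, mutant: str, shots: list[Example]) -> str:
    """Assemble an instruction, optional labelled examples, and the query pair."""
    parts = ["Does the mutant change the program's observable behaviour? "
             "Answer CHANGED or EQUIVALENT."]
    for ex in shots:
        parts.append(f"Original:\n{ex.original}\nMutant:\n{ex.mutant}\nAnswer: {ex.label}")
    parts.append(f"Original:\n{original}\nMutant:\n{mutant}\nAnswer:")
    return "\n\n".join(parts)


if __name__ == "__main__":
    mutant = make_mutant(ORIGINAL)
    print("killed by boundary test:", not boundary_test(load(mutant)))
    print(build_prompt(ORIGINAL, mutant, FEW_SHOT))
```

Here the mutant is detectable by the boundary test, so it is an easy case. The interesting inputs for the model are mutants that no existing test kills, where its prediction helps separate genuine test‑suite gaps from behaviourally equivalent mutants.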

The proposed pipeline analyses GPT‑4’s responses to rank mutants by their likelihood of escaping detection and correlates the model’s confidence with how difficult each mutant is for the test suite to detect. Experiments suggest that large language models can support software quality assurance by highlighting weak spots in test suites, prioritising test cases, and providing an accessible baseline before investing in more computationally intensive mutation analyses.
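The ranking and correlation steps can be sketched as follows, under stated assumptions. Each mutant is assumed to carry a model‑derived escape probability `p_escape` (how the paper extracts confidence from GPT‑4’s responses is not specified here), and "difficulty" is assumed to be the fraction of tests that fail to kill the mutant. The sketch ranks mutants by `p_escape` and computes a Spearman rank correlation (Pearson correlation on average ranks, which handles ties) using only the standard library. The `Mutant` fields and the sample values are hypothetical and illustrative, not results from the paper.

```python
import math
from dataclasses import dataclass
from statistics import mean


@dataclass
class Mutant:
    mutant_id: str
    p_escape: float     # hypothetical: model's probability that the mutant survives the tests
    tests_run: int
    tests_killing: int  # number of tests that detect (kill) this mutant

    @property
    def difficulty(self) -> float:
        """Share of tests that do NOT detect the mutant; 1.0 means it escapes every test."""
        return 1.0 - self.tests_killing / self.tests_run


def rank_by_escape(mutants: list[Mutant]) -> list[Mutant]:
    """Most-likely-to-escape first, so weak spots in the suite surface at the top."""
    return sorted(mutants, key=lambda m: m.p_escape, reverse=True)


def average_ranks(values: list[float]) -> list[float]:
    """1-based ranks; tied values share the average of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(xs: list[float], ys: list[float]) -> float:
    """Spearman's rho as Pearson correlation of ranks (undefined if either input is constant)."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sxx = sum((a - mx) ** 2 for a in rx)
    syy = sum((b - my) ** 2 for b in ry)
    return cov / math.sqrt(sxx * syy)


# Illustrative values only.
MUTANTS = [
    Mutant("m1", p_escape=0.9, tests_run=10, tests_killing=0),
    Mutant("m2", p_escape=0.2, tests_run=10, tests_killing=8),
    Mutant("m3", p_escape=0.6, tests_run=10, tests_killing=3),
    Mutant("m4", p_escape=0.4, tests_run=10, tests_killing=5),
]

if __name__ == "__main__":
    print([m.mutant_id for m in rank_by_escape(MUTANTS)])
    rho = spearman([m.p_escape for m in MUTANTS], [m.difficulty for m in MUTANTS])
    print(f"Spearman rho = {rho:.2f}")
```

A rank correlation is used rather than a linear one because model confidences need not be calibrated; only their ordering is assumed to be meaningful.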